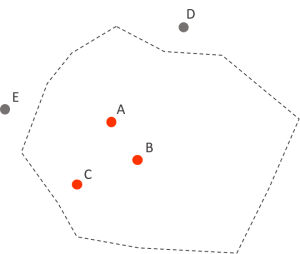

Point-in-polygon is a textbook problem in geographical analysis: given a list of geocoordinates return those that fall within a boundary of an area. You could feed the algorithm a list of cities across the globe and it will recognise which of them belong to Sri Lanka and which to a completely random shape you drew on planet Earth. It applies to many scenarios: analyses that aren’t based on administrative boundaries, situations in which polygons change over time, or problems that aren’t geographical at all, like computer graphics. Not so long ago, I turned to point-in-polygon to generate a set of towns and villages to plot on a map of Poland from 1933. Such list has not been made available on the web and I wasn’t super keen on typing out thousands of locations. Instead, I used that mathematical cookie-cutter to extract only those locations from today’s Poland, Ukraine, Belarus, and Russia that were present within the interwar Poland boundaries. In this post I will show how to perform a point-in-polygon analysis in R and possibly automate a significant chunk of data preparation for map visualisations.

The other day I was preparing a map of Europe that I wanted to populate with a handful of prominent cities. How does one decide which cities are worth plotting? Are locations over half a million citizens prominent enough for my use case? Even with such high threshold I couldn’t get myself to manually prepare the list: any set of over 10 items seems like something worth automating. That being the case, I opened R and wrote a point-in-polygon procedure for this and future uses.

To follow my example you need a base map and some geographical locations. If your points are missing their geocoordinates, you can look them up using one of the geocoding tools or APIs on the web, for example Map Developers. Alternatively, you can use Geonames, a geographical database with more than 10M locations, freely distributed under a Creative Commons licence. Here I chose their “lightweight” pack of 24 000 locations, each inhabited by more than 15 000 people. That many data points would flood my map, so I will filter it to cities with over 1M people – with the exception of 250 000 for Spain (my focus country). Then I will use the point-in-polygon query to remove all locations that fell outside the borders of my map. The whole procedure will be done in R. The script is not particularly complicated but it involves some operations on spatial objects and coordinates systems that can be tricky for beginners.

Open up R Studio. You need to install and load 4 packages: sp, rgdal, maps, and dplyr:

library(sp)

library(rgdal)

library(maps)

library(dplyr)

Let’s load both datasets we’ll be working with: the base map and the list of 24 000 locations from Geonames:

countries <- readOGR(dsn="output.geojson")

cities15000 <- read.delim("cities15000/cities15000.txt", header=FALSE, sep="\t")

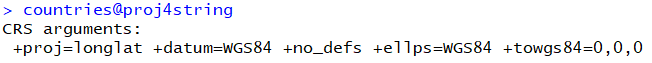

First, we need to check our map’s coordinate system. Both the map and the points need to have one assigned for point in polygon to work. You can check what’s their current CRS with the function proj4string.

countries@proj4string

My map’s CRS was correctly recognised as WGS84. You might find that your base map doesn’t have any projection assigned or you need to change it to conform to your locations’ spatial reference system (or vice versa). Use the CRS function to assign a projection, or sptransform to transform it to another one. OGR2OGR utility can also come handy as we saw in my previous post.

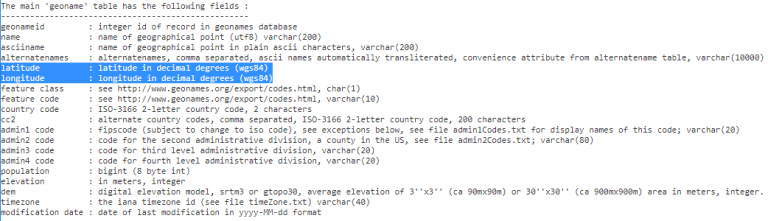

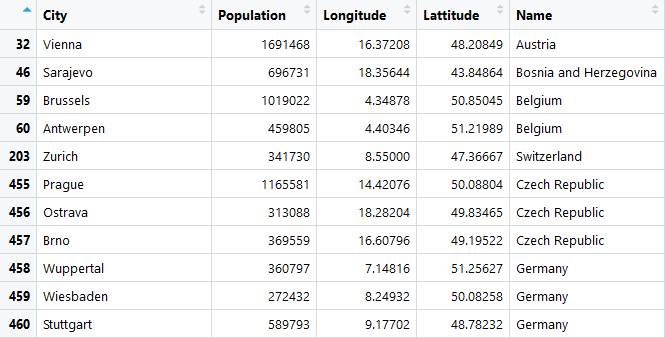

Now that the base map is ready, it’s time to clean up and filter my locations. The Geonames dataset includes columns I don’t necessarily need: I will reduce it to name, population size, latitude, and longitude. Then I’ll assign more telling column names to the set, and finally cut it down to locations of over 250 000 inhabitants.

cities <- subset(cities15000,select=c("V3","V15","V5","V6"))

names(cities) <- c("City","Population","Lattitude","Longitude")

cities <- filter(cities, Population >= 250000)

nrow(cities)

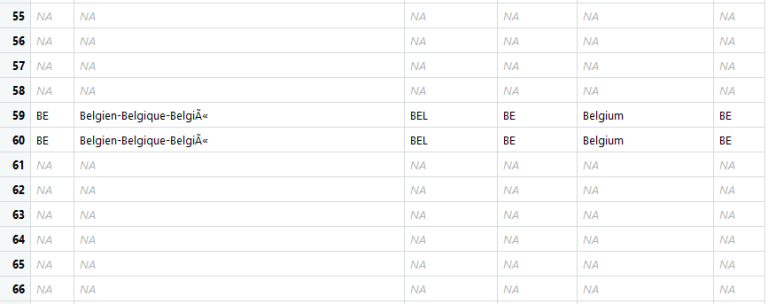

I got back 1609 locations. I couldn’t work the dataset further to remove all non-Spanish metropolies of under 1M inhabitants because there is no way to tell which point belong to which country in my dataset. I will have this information after running the point-in-polygon – yet another cool feature.

It’s time to assign my locations a coordinate system. I will first turn the data frame to a spatial points data frame with the coordinates function. Further transformation with as is required to turn the frame into a spatial points object – required by the point in polygon algorithm.

coordinates(cities) <- c("Longitude","Lattitude")

as(cities,"SpatialPoints")

Once we get the dataset in the correct geographical format, we can give it a coordinate system. CRS information can often be found in the dataset’s metadata – and so is the case of the Geonames’ products.

We assign the projection with the CRS command and tell R that our cities and countries follow the same coordinate system.

proj4string(cities) <- CRS("+proj=longlat +datum=WGS84")

proj4string(cities) <- proj4string(countries)

With that prepared data set we can finally run the point-in-polygon algorithm! It’s as simple as calling the over function on cities and countries:

pointsinpoly <- over(cities,countries)

You will notice many empty rows in the resulting dataset. These are the locations that weren’t found within the map boundaries.

As the last step, let’s remove the rows and append our findings to the city data frame.

output <- na.omit(cbind(cities@data,cities@coords,pointsinpoly$NAME_ENGL))

nrow(output)

colnames(output)[5] <- 'Name'

The dataset is ready:

And it’s looking good! Now every time I have to cut down my location set I have a working program to do it! I guess now I can finally create that map I announced 4 posts ago… Or can I? Stay tuned!

Follow @EveTheAnalyst